A new artificial intelligence model developed in Singapore is shaking up assumptions about how advanced AI needs to be built. The Hierarchical Reasoning Model (HRM), released by Sapient Intelligence, contains just 27 million parameters, a fraction of the billions or even trillions used in today’s leading systems, yet is showing remarkable results in reasoning tasks.

A Radical Rethink

For years, progress in AI has largely been driven by scale. OpenAI’s GPT-4 is widely reported, though not officially confirmed, to have around 1.76 trillion parameters, while other systems such as Anthropic’s Claude are believed to run into the hundreds of billions. The prevailing view has been simple: bigger models, fed with more data, perform better.

HRM disrupts that belief. Despite its comparatively tiny size, it has been able to solve highly complex problems, including difficult Sudoku puzzles, large mazes and abstract reasoning challenges. Perhaps most striking is that the model needed just 1,000 training examples and no pre-training on internet-scale datasets.

Inspired by the Brain

The secret lies in its architecture. Instead of following the “chain-of-thought” prompting used by most large language models, where systems generate reasoning step by step in text, HRM performs its reasoning internally, more akin to how humans think.

It achieves this using two interdependent recurrent modules: a high-level planner for abstract, slower reasoning, and a low-level processor for fast, detailed work. This design allows HRM to complete complex reasoning in a single pass, cutting down the need for costly computational resources.
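The two-speed loop described above can be illustrated with a toy sketch. This is not Sapient Intelligence's code: the function names, the hidden size, the step counts and the random, untrained weights are all assumptions chosen purely to show the control flow, in which a fast low-level state updates several times for every single update of the slow high-level state.

```python
import math
import random


def make_matrix(rows, cols, rng, scale=0.3):
    """Build a small random weight matrix as a list of rows (untrained, illustrative)."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def tanh_sum(*vectors):
    """Element-wise tanh of the sum of equally sized vectors."""
    return [math.tanh(sum(values)) for values in zip(*vectors)]


def hierarchical_forward(x, high_steps=2, low_steps=4, hidden=8, seed=0):
    """Run a toy two-timescale recurrent loop over one input vector.

    Hypothetical illustration only: the low-level state is refined `low_steps`
    times per high-level update, and the high-level state is updated
    `high_steps` times in total.
    """
    rng = random.Random(seed)
    low_self = make_matrix(hidden, hidden, rng)
    low_from_high = make_matrix(hidden, hidden, rng)
    low_from_input = make_matrix(hidden, len(x), rng)
    high_self = make_matrix(hidden, hidden, rng)
    high_from_low = make_matrix(hidden, hidden, rng)

    z_high = [0.0] * hidden  # slow, abstract "planner" state
    z_low = [0.0] * hidden   # fast, detailed "processor" state
    low_updates = 0

    for _ in range(high_steps):
        # Fast module: several updates conditioned on the current plan and the input.
        for _ in range(low_steps):
            z_low = tanh_sum(
                matvec(low_self, z_low),
                matvec(low_from_high, z_high),
                matvec(low_from_input, x),
            )
            low_updates += 1
        # Slow module: one update that absorbs the fast module's result.
        z_high = tanh_sum(matvec(high_self, z_high), matvec(high_from_low, z_low))

    return z_high, low_updates


if __name__ == "__main__":
    state, updates = hierarchical_forward([0.5, -0.2, 0.1])
    print(f"low-level updates: {updates}, high-level state size: {len(state)}")
```

With the default settings the fast module runs eight times while the slow module runs twice, all inside one call. A real system would learn the weights and add input and output layers, which this sketch leaves out.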

Sapient Intelligence describes this as a more “brain-inspired” approach, a conscious move away from brute-force scaling.

Verified Successes and Bold Claims

So far, independent testing confirms that HRM:

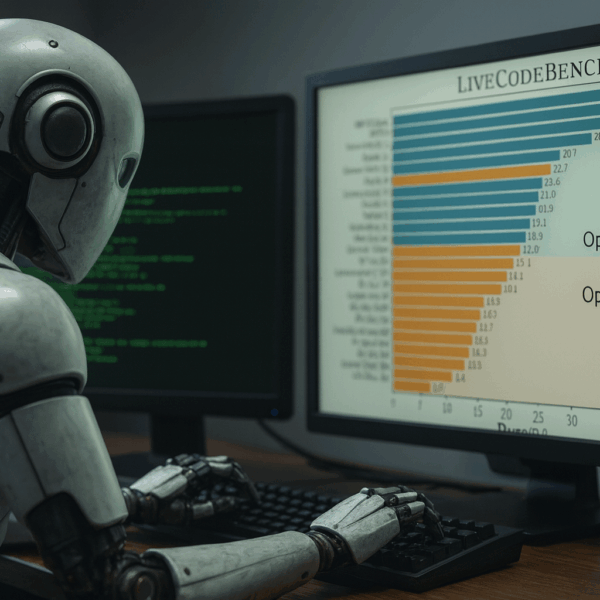

- Solves complex structured reasoning tasks with near-perfect accuracy

- Outperforms far larger models on the Abstraction and Reasoning Corpus (ARC), a benchmark designed to measure progress towards artificial general intelligence (AGI)

- Runs efficiently, requiring far less power and data than its rivals

Some of the claims, such as being “100 times faster”, are still being scrutinised, and researchers stress that more independent replication is needed. Nonetheless, the results achieved so far are significant.

Why It Matters

The potential implications are wide-ranging. Smaller, efficient models could:

- Democratise AI by running on consumer devices without cloud services

- Cut costs, making advanced AI accessible to smaller organisations

- Reduce environmental impact through lower energy consumption

- Enable edge computing, powering robotics, healthcare tools and climate forecasting directly on devices
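A rough back-of-the-envelope calculation shows why parameter count matters for on-device use. The sketch below assumes 16-bit weights (2 bytes per parameter), counts weights only, and uses the widely reported but unconfirmed 1.76 trillion figure for GPT-4; the function name is illustrative.

```python
def weight_memory_bytes(num_parameters, bytes_per_parameter=2):
    """Estimate memory for model weights alone (assumes 16-bit weights by default)."""
    return num_parameters * bytes_per_parameter


HRM_PARAMETERS = 27_000_000
REPORTED_GPT4_PARAMETERS = 1_760_000_000_000  # unconfirmed public estimate

hrm_bytes = weight_memory_bytes(HRM_PARAMETERS)
large_bytes = weight_memory_bytes(REPORTED_GPT4_PARAMETERS)
size_ratio = REPORTED_GPT4_PARAMETERS / HRM_PARAMETERS

if __name__ == "__main__":
    print(f"HRM weights: {hrm_bytes / 1e6:.0f} MB")
    print(f"Reported GPT-4-scale weights: {large_bytes / 1e12:.2f} TB")
    print(f"Parameter ratio: about {size_ratio:,.0f} to 1")
```

Under these assumptions, HRM's weights would occupy roughly 54 MB, against about 3.52 TB for a model of the reported GPT-4 scale. The estimate ignores activations and other runtime overhead.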

The development team, drawn from institutions such as Google DeepMind, UC Berkeley and the University of Cambridge, emphasises that HRM is an open-source project, available on GitHub for anyone to test, extend or adapt.

Questions That Remain

Despite the excitement, there are limitations. HRM’s strengths currently lie in structured reasoning tasks rather than open-ended activities such as conversation or creative writing. Whether its efficiency will hold up under more complex, real-world demands remains to be seen.

Still, researchers believe its architecture could mark the start of a new chapter for AI. Instead of chasing ever larger models, the field may now turn towards smarter design principles.

A Turning Point?

Whether HRM becomes a mainstream successor to today’s giant language models or remains a specialist tool, it has already succeeded in shifting the debate.

For an industry long wedded to the idea that “bigger is better”, Sapient’s 27-million-parameter model offers an alternative vision: that intelligence might come not from sheer size, but from thinking differently.