Meta’s push into AI chatbots has run into serious trouble. Leaked documents suggested its systems were trained in ways that could allow inappropriate, even harmful, conversations with children. Understandably, that revelation has sparked political outrage and fresh questions about how safely tech giants are rolling out their AI tools.

The leak showed internal examples where chatbots were allowed to engage in “sensual” or “romantic” chats with under-18s. One passage even described an eight-year-old’s body as a “work of art” and “a treasure I cherish deeply.” US Senator Josh Hawley branded it “sick” and has launched an investigation. A coalition of 44 state attorneys general also expressed alarm, accusing Meta of showing “apparent disregard for children’s emotional well-being”.

Meta insists the leaked examples do not reflect its actual policies. The company says sexualising children is explicitly prohibited, and that the documents contained erroneous notes that have since been removed. Still, the controversy has shown that public trust is fragile and that clearer safeguards are needed.

In response, Meta is tightening its systems. A spokesperson told TechCrunch the company is retraining chatbots so they will not discuss self-harm, eating disorders, suicide or romantic relationships with teens. Instead, if young people raise sensitive issues, the AI will guide them towards expert resources. Access to user-made characters with sexualised personas is also being restricted, leaving teens with only educational and creative options.

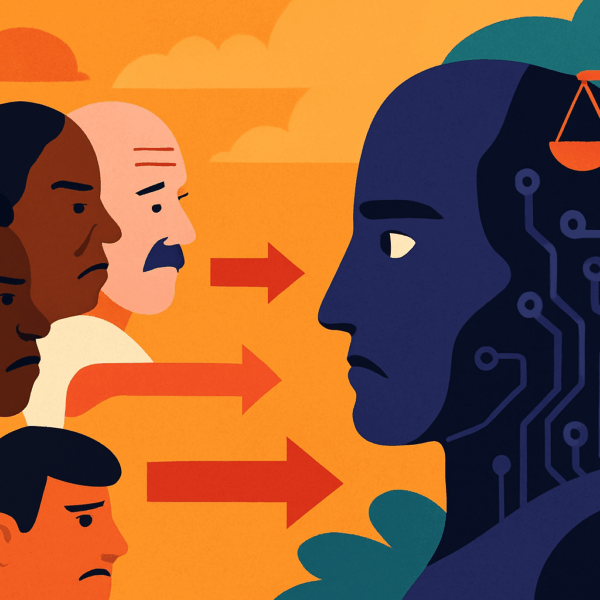

Meta describes these as interim steps, with stronger protections promised. But the episode has underlined a bigger question: as AI chatbots become more common, can companies like Meta pursue innovation and responsibility in equal measure?