The race to power artificial intelligence is pushing computer chips to their thermal limits. Modern AI processors generate so much heat that conventional cooling methods are struggling to keep up. In datacentres filled with thousands of servers, that heat translates into higher energy use and mounting costs, and it could become a barrier to further progress.

Microsoft believes it has found a way to cool these chips more efficiently by taking a cue from nature. The company has tested a new system that channels liquid coolant directly through microscopic grooves etched into the silicon itself. Known as microfluidic cooling, this approach removed heat up to three times more effectively than today’s best-performing cold plates in the company’s lab-scale tests, depending on workload and configuration.

How It Works

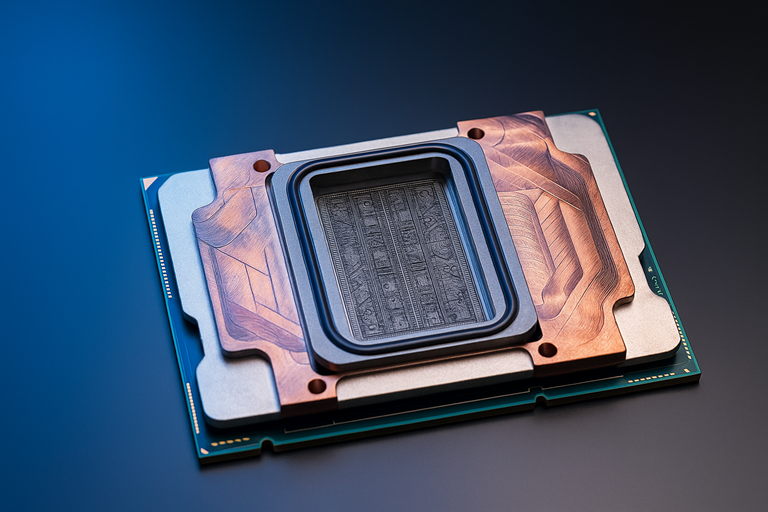

Traditional cooling systems sit on top of chips and pull heat away through several layers of material. These layers, while protective, also act as insulation. Microfluidics removes that barrier. Channels as thin as a human hair are carved into the back of the chip so that coolant flows directly over the silicon, taking heat away at its source.
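The insulating effect of stacked layers can be pictured as thermal resistances in series, where the temperature rise equals heat flow multiplied by total resistance. The sketch below is a rough illustration of that idea only: the layer names, resistance values and chip power are assumed placeholders, not figures from Microsoft.

```python
# Illustrative sketch only: every number here is an assumed placeholder,
# not a measured or Microsoft-reported value.

def temperature_rise(power_w, layer_resistances):
    """Steady-state rise in kelvin across layers in series: dT = P * sum(R)."""
    return power_w * sum(layer_resistances.values())

CHIP_POWER_W = 500.0  # assumed heat output of one chip, in watts

# Assumed thermal resistances in kelvin per watt for a cold plate stack.
COLD_PLATE_PATH = {
    "silicon": 0.02,
    "first interface material": 0.03,
    "heat spreader": 0.02,
    "second interface material": 0.03,
    "cold plate": 0.04,
}

# Assumed resistances when coolant runs in channels etched into the silicon.
MICROFLUIDIC_PATH = {
    "silicon above channels": 0.01,
    "channel wall to coolant": 0.03,
}

if __name__ == "__main__":
    for name, path in (("cold plate", COLD_PLATE_PATH),
                       ("microfluidic", MICROFLUIDIC_PATH)):
        rise = temperature_rise(CHIP_POWER_W, path)
        print(f"{name}: {rise:.1f} K rise above coolant")
```

Fewer layers between silicon and coolant means a smaller total resistance, so the same chip power produces a smaller temperature rise.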

The Microsoft team went further, using artificial intelligence to analyse the unique heat signatures across a chip. The AI then adjusts the coolant flow to target hotspots, much as the veins in a leaf deliver water where it is most needed.
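Microsoft has not published how its AI steers the coolant, so the following is only a simple stand-in to make the idea of targeting hotspots concrete. It splits a fixed coolant budget across chip zones in proportion to their heat load, with a guaranteed minimum for every zone. The function name, zone names, and all numbers are hypothetical.

```python
# Hypothetical heuristic, not Microsoft's method: share a fixed coolant
# budget across zones in proportion to how much heat each zone produces.

def allocate_flow(heat_map_w, total_flow_ml_min, min_share=0.05):
    """Return coolant flow per zone, weighted by heat, with a floor per zone."""
    zone_count = len(heat_map_w)
    if zone_count == 0:
        return {}
    reserved = min_share * zone_count
    if reserved > 1.0:
        raise ValueError("min_share is too large for this many zones")

    total_heat = sum(heat_map_w.values())
    flows = {}
    for zone, heat in heat_map_w.items():
        # Fall back to an even split if no zone reports any heat.
        weight = heat / total_heat if total_heat > 0 else 1.0 / zone_count
        share = min_share + (1.0 - reserved) * weight
        flows[zone] = share * total_flow_ml_min
    return flows

if __name__ == "__main__":
    # Assumed heat readings in watts for three made-up zones.
    readings = {"compute cores": 60.0, "cache": 30.0, "io": 10.0}
    for zone, flow in allocate_flow(readings, 1000.0).items():
        print(f"{zone}: {flow:.0f} ml/min")
```

The minimum share reflects a design choice in this sketch: cooler zones still receive some coolant, while the hottest zone receives the largest portion.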

Early tests show promising results. Microsoft reported a 65 percent reduction in the maximum temperature rise of the silicon inside a GPU, a figure that will vary by chip type, and up to three times the heat removal of cold plates. The system also cooled a server running a simulated Microsoft Teams meeting, a test designed to mimic real-world conditions.
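To see what a 65 percent figure could mean in practice, the short calculation below applies it to the temperature rise above the coolant rather than to the absolute temperature, which is an assumption about how the figure should be read. The coolant temperature and baseline rise are invented for illustration.

```python
# Worked arithmetic with assumed inputs; only the 65 percent figure
# comes from the article.

def chip_temperature(coolant_c, baseline_rise_c, reduction=0.65):
    """Chip temperature after cutting the rise above coolant by `reduction`."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return coolant_c + baseline_rise_c * (1.0 - reduction)

if __name__ == "__main__":
    coolant_c = 30.0        # assumed coolant temperature, degrees Celsius
    baseline_rise_c = 50.0  # assumed rise above coolant with a cold plate
    before = coolant_c + baseline_rise_c
    after = chip_temperature(coolant_c, baseline_rise_c)
    print(f"before: {before:.1f} C, after: {after:.1f} C")
```

With these assumed inputs, the rise above the coolant falls from 50 degrees to 17.5 degrees, so the chip would sit at about 47.5 degrees Celsius instead of 80.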

Lessons from Nature

The microchannel designs were inspired by biology. Working with Swiss startup Corintis, Microsoft engineers developed patterns that resemble the branching veins of leaves or butterfly wings. These natural shapes proved better at spreading coolant evenly than the straight-line channels used in traditional systems.

“Systems thinking is crucial when developing a technology like microfluidics,” said Husam Alissa, Microsoft’s director of systems technology. “You need to understand how silicon, coolant, server and datacentre interact to make the most of it.”

The engineering challenges were significant. The channels had to be deep enough to allow coolant to circulate without clogging, but not so deep that they weakened the chip. Over the past year, Microsoft produced four prototype designs and tested different materials, sealing methods and etching processes to ensure reliability.

Why It Matters

Efficient cooling is not just a technical detail; it is central to the future of AI. As chips grow more powerful, traditional methods will soon hit their limits. “If you are still relying heavily on cold plates in five years, you are stuck,” warned Sashi Majety, senior programme manager at Microsoft.

By cooling chips more effectively, microfluidics could allow servers to run faster and pack more computing power into smaller spaces. This could reduce the need for new datacentre buildings and cut operational energy costs, improving sustainability.

What Comes Next

Microsoft plans to explore how microfluidic cooling can be built into its next generation of chips, including its in-house Cobalt and Maia designs. The company is also working with fabrication partners to prepare the technology for large-scale production.

Beyond keeping servers cool, microfluidics could unlock new chip architectures, including 3D-stacked designs that would otherwise overheat.

“We want microfluidics to become something everybody does, not just something we do,” said Jim Kleewein, a Microsoft technical fellow. “The more people that adopt it, the better it will be for everyone.”

If the results scale up, Microsoft’s nature-inspired cooling breakthrough could reshape how the world’s datacentres handle the heat of artificial intelligence.