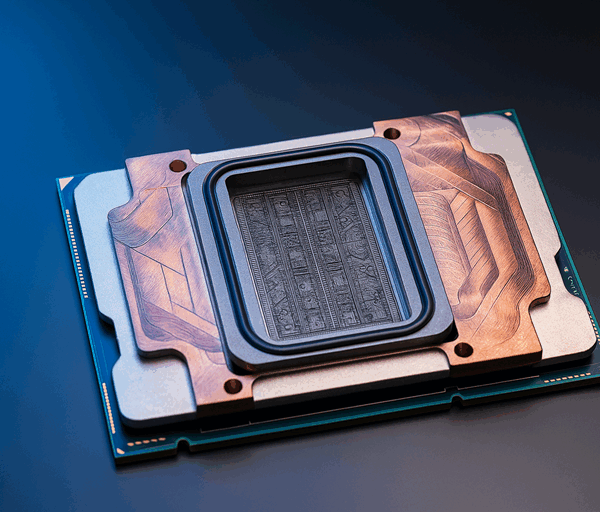

Anthropic has launched Project Glasswing, a new cyber security initiative built around its unreleased AI system Claude Mythos Preview, after saying the model is unusually capable of finding and exploiting software vulnerabilities. The company says the system has uncovered thousands of serious flaws, including weaknesses affecting major operating systems and web browsers, and that it has decided not to make the model generally available at this stage.

What is Anthropic Mythos?

According to Anthropic, Claude Mythos Preview is a frontier AI model with far stronger coding and security testing abilities than the company’s previous systems. In its announcement, the company said the model had reached a level where AI could outperform almost all human experts at finding and sometimes exploiting software bugs. Anthropic also published benchmark results showing Mythos Preview outperforming Claude Opus 4.6 on several coding and cyber-related tests, though those results come from the company’s own evaluations and should be understood in that context.

Why experts are worried

The concern is not simply that Anthropic Mythos can identify bugs. The greater fear is that a model with those abilities could also help attackers weaponise those bugs more quickly. Anthropic says Mythos Preview found vulnerabilities in software that had survived years, and in some cases decades, of human review and automated testing. It has presented this as evidence that AI could sharply reduce the skill and effort needed to discover exploitable flaws, potentially increasing the pace and scale of cyber attacks.

Anthropic has disclosed several examples, including a long-standing OpenBSD flaw, a vulnerability in FFmpeg, and a chain of Linux kernel issues that could allow a user to gain deeper control of a machine. The company says those specific issues were responsibly reported and patched before publication. However, many of the broader claims about the total number and severity of vulnerabilities found have not been independently verified in public.

Why banks were warned

Banks appear to have been singled out because financial institutions are part of the critical infrastructure that regulators most want to protect from a new generation of AI-assisted cyber threats. Bloomberg first reported that US Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell called in senior bank executives this week to discuss the risks raised by Anthropic’s latest model. Reuters has also reported the meeting, saying officials wanted major lenders to be aware of possible future threats and to take precautions.

That does not mean Anthropic Mythos was shown to have targeted banks specifically. Rather, regulators appear to be treating the technology as a possible systemic risk because powerful AI tools could help malicious actors identify weaknesses in software used across the financial system. Anthropic itself has said the fallout from unsafe deployment of such capabilities could affect economies, public safety and national security.

A limited release

Instead of a public launch, Anthropic has restricted Mythos Preview to selected partners through Project Glasswing, including Amazon Web Services, Apple, Cisco, Google, Microsoft, Nvidia, Palo Alto Networks and JPMorganChase. Anthropic says the aim is to use the model for defensive work such as vulnerability detection and system hardening, while it develops stronger safeguards for future models.

At the moment, the story of Anthropic Mythos is less about a public product launch and more about a warning. Anthropic and regulators alike appear to believe that AI has reached a point where it could reshape cyber security, for better or worse.
